Notebooks Execution Overview

When Losant executes your notebook, we run your Jupyter Notebook within a Docker container on a private virtual machine against input data from various sources including devices and data tables.

Execution can be triggered in two ways: manually or from a workflow. Either way, you must first create your Notebook Inputs and upload your Notebook File. Optionally, if you would like any custom outputs from a notebook, you can configure your Notebook Outputs.

Post Execution Status

After execution has finished, your notebook will have one of the following statuses:

- completed: your notebook successfully ran.

- timeout: your notebook ran out of time.

- errored: your notebook threw an error.

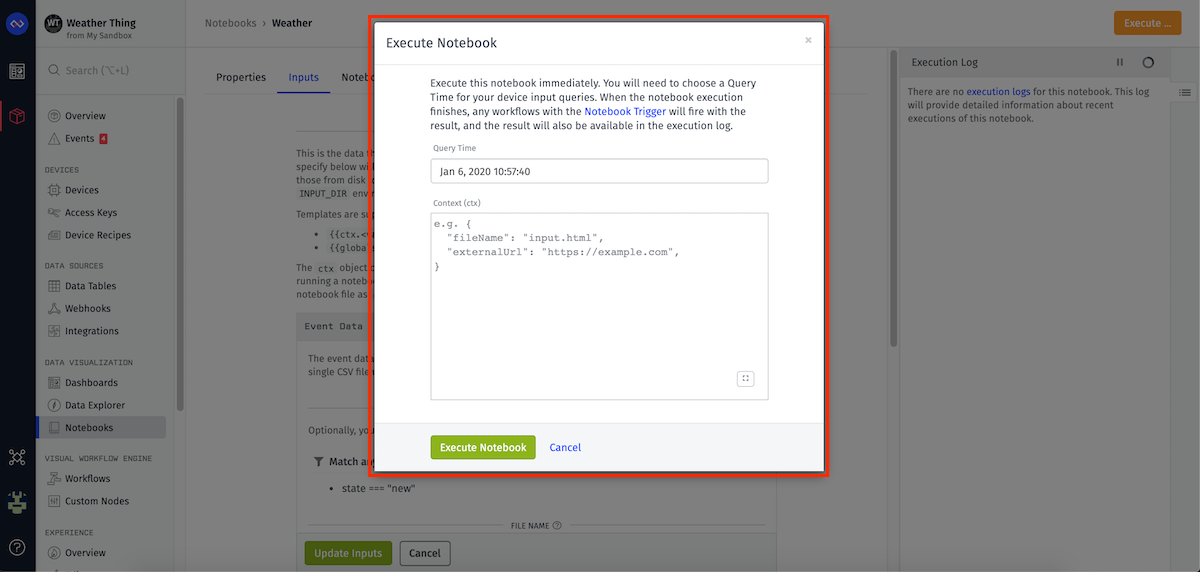

Manual Execution

To execute your notebook immediately, click the button in the top right-hand corner of the notebook's overview page. A modal will appear in which you can pick the Query Time used to generate your input files. Submitting the modal enqueues one notebook execution.

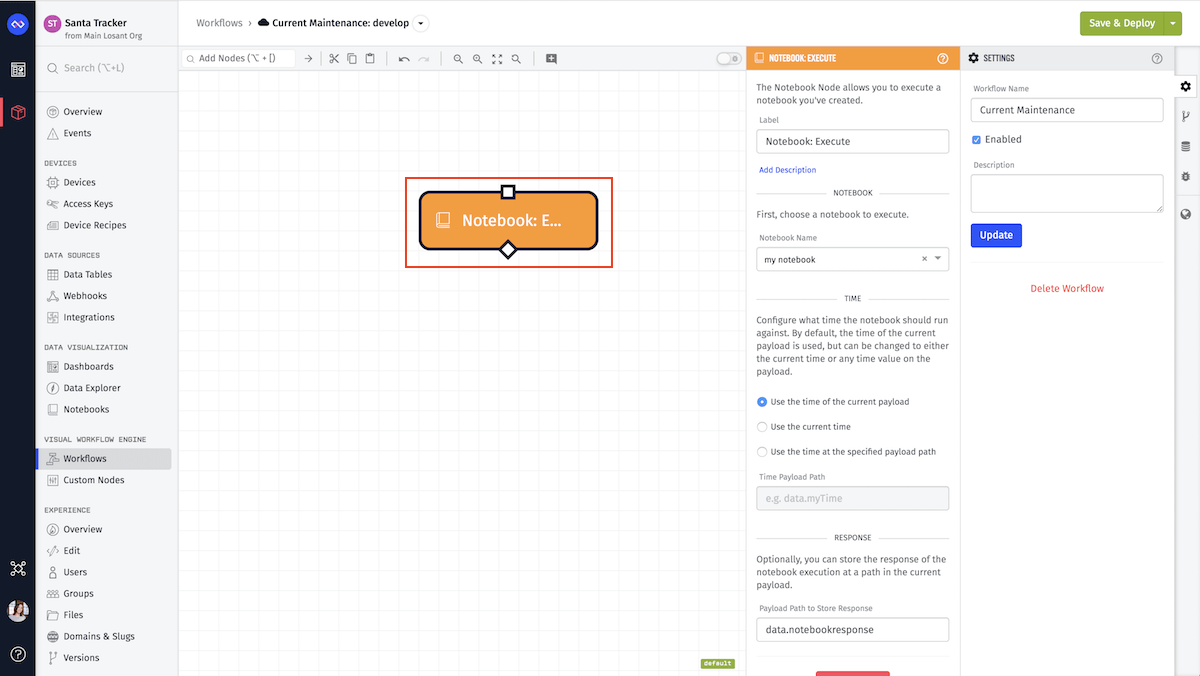

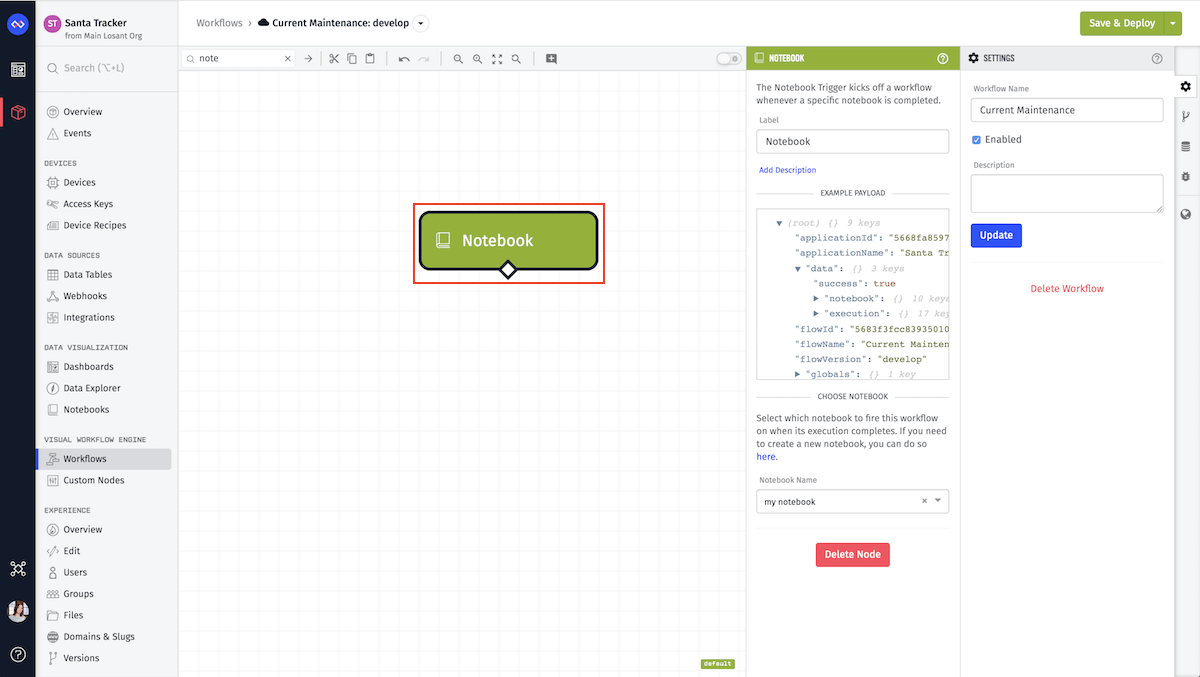

Workflow Execution

There are two notebook-related workflow nodes: one enqueues a notebook for execution, and the other triggers a workflow when a notebook has finished executing.

Notebook Execution Node

The Notebook Execution Node enqueues a specific notebook that has already been defined in your application.

Notebook Trigger

The Notebook Trigger fires when the given notebook has finished executing. This allows you to write logic to download the results, or to send an email or text message notifying your team that the execution is complete. The trigger fires not only on success but also when the notebook throws an error or runs out of run time.
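The branching that such a workflow performs can be sketched in plain Python. This is only an illustration of the logic: Losant workflows are assembled from nodes rather than written in Python, and the `next_action` function and its return strings are hypothetical. Only the three status values come from this page.

```python
from enum import Enum


class ExecutionStatus(Enum):
    """The three post-execution statuses listed on this page."""
    COMPLETED = "completed"
    TIMEOUT = "timeout"
    ERRORED = "errored"


def next_action(status: str) -> str:
    """Pick a follow-up step for a finished execution (hypothetical helper)."""
    parsed = ExecutionStatus(status)  # raises ValueError for an unknown status
    if parsed is ExecutionStatus.COMPLETED:
        return "download results and notify the team"
    if parsed is ExecutionStatus.TIMEOUT:
        return "alert: notebook ran out of run time"
    return "alert: notebook threw an error"


for value in ("completed", "timeout", "errored"):
    print(value, "->", next_action(value))
```

Handling all three statuses explicitly matters because the trigger fires on failures as well as on success.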

Canceling an Execution

You can cancel a notebook execution that is in progress from the notebook's execution log, which can be found on the notebook's "Properties" tab. When a notebook is executing, the log item will have an option to "Cancel Execution".

After confirming the cancellation, any in-progress notebook executions will be immediately canceled.

Considerations

When using notebooks, keep in mind that run time is limited. Run time is measured from when your notebook starts running until it stops running; the setup and teardown of your virtual machine are not counted. The run time limits for notebook execution depend on your account.

For example, a sandbox user can, by default, run only one notebook at a time. Each execution is allocated at most five minutes of run time, and total run time is limited to 60 minutes per usage period. If a notebook takes longer than five minutes to complete, the execution times out. If every execution used the entire five minutes, this user could run only 12 executions per usage period (60 / 5 = 12); shorter executions allow more.
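The arithmetic behind that example can be checked with a short sketch. The function name `executions_per_period` is made up for illustration, and the 2-minute notebook is a hypothetical case; only the 60-minute and 5-minute figures come from the sandbox defaults described above.

```python
import math


def executions_per_period(period_minutes: float, minutes_per_run: float) -> int:
    """Number of whole executions that fit into one usage period."""
    if minutes_per_run <= 0:
        raise ValueError("minutes_per_run must be positive")
    return math.floor(period_minutes / minutes_per_run)


# Sandbox defaults from the text: 60 minutes per period, 5 minutes per run.
worst_case = executions_per_period(60, 5)    # every run uses the full 5 minutes
lighter_runs = executions_per_period(60, 2)  # hypothetical 2-minute notebook
print(worst_case, lighter_runs)
```

Since setup and teardown are excluded from run time, only the time the notebook itself spends running should be plugged in as `minutes_per_run`.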

Execution Environment

Once execution begins, a private virtual machine (VM) is spun up just for your notebook. Your inputs are then downloaded onto the VM. Next, your notebook is executed within a Docker container using nbconvert.
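For local testing, an nbconvert run can be approximated with the standard `jupyter nbconvert --execute` command. The sketch below only builds that command. The exact flags Losant passes are not stated on this page, so the file names, the output name, and the 300-second timeout (chosen to mirror the five-minute sandbox limit) are assumptions.

```python
import shlex


def nbconvert_command(notebook_path: str,
                      output_name: str = "result.ipynb",
                      timeout_seconds: int = 300) -> list:
    """Build a `jupyter nbconvert` command that executes a notebook.

    Assumption: this mirrors a typical local run, not Losant's exact invocation.
    """
    return [
        "jupyter", "nbconvert",
        "--to", "notebook",   # write the executed notebook as a new .ipynb file
        "--execute",          # run all cells before converting
        f"--ExecutePreprocessor.timeout={timeout_seconds}",  # per-cell timeout
        "--output", output_name,
        notebook_path,
    ]


cmd = nbconvert_command("notebook.ipynb")
print(shlex.join(cmd))
# To actually run it (requires Jupyter to be installed):
#   import subprocess; subprocess.run(cmd, check=True)
```

Note that `ExecutePreprocessor.timeout` applies to each cell individually, so it is only a rough stand-in for an overall run time limit.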

Within your Docker container you have access to your input directory and your output directory, but you do not have network access.
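A notebook cell that follows this pattern reads from the input directory and writes everything it wants to keep to the output directory. The sketch below is a minimal illustration using only the standard library. The environment variable names `INPUT_DIR` and `OUTPUT_DIR`, the file names, and the `value` column are assumptions, not details confirmed on this page.

```python
import csv
import os
from pathlib import Path


def summarize_column(input_dir, output_dir,
                     input_name="data.csv",
                     output_name="summary.csv",
                     column="value"):
    """Read one numeric column from an input CSV and write its count and mean."""
    input_dir, output_dir = Path(input_dir), Path(output_dir)
    with (input_dir / input_name).open(newline="") as f:
        values = [float(row[column]) for row in csv.DictReader(f)]
    mean = sum(values) / len(values) if values else float("nan")

    output_dir.mkdir(parents=True, exist_ok=True)
    out_path = output_dir / output_name
    with out_path.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["count", "mean"])
        writer.writerow([len(values), mean])
    return out_path


# Hypothetical usage inside a notebook cell (variable names are assumptions):
#   summarize_column(os.environ.get("INPUT_DIR", "input"),
#                    os.environ.get("OUTPUT_DIR", "output"))
```

Because the container has no network access, everything the notebook needs must already be present in the input directory.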

Once your notebook has completed, errored, or timed out, we send all outputs to our storage and keep them for seven days. The virtual machine is then destroyed, along with any outputs from your notebook that you did not specify in your outputs configuration. After seven days, your outputs are removed from our storage. If you would like to keep them longer, you can move them into your Application's Files through your output configuration.

To get the most out of notebooks, you can request your input data and pull the Docker image that your notebook runs in on Losant. This lets you test your notebook in the same environment in which it is executed.

Supported Versions

Library versions will vary depending on your selected image version. View a full list of supported libraries.
